Stamford, Texas, lost its hospital on a Monday in July 2018. The emergency room closed at five o’clock, the inpatient beds emptied, and the building on Columbia Street went still. Stamford Memorial had been running on fumes for years: its average daily inpatient census dropped from 2.6 in 2007 to 0.48 in the first half of 2018, well below the Medicare threshold it needed to keep the lights on. The CEO called the closure “an end of an era” and promised the hospital would maintain whatever primary care services it could. That turned out to be a single, bare-bones clinic, open only on weekdays, eight to five, and closed for lunch.¹

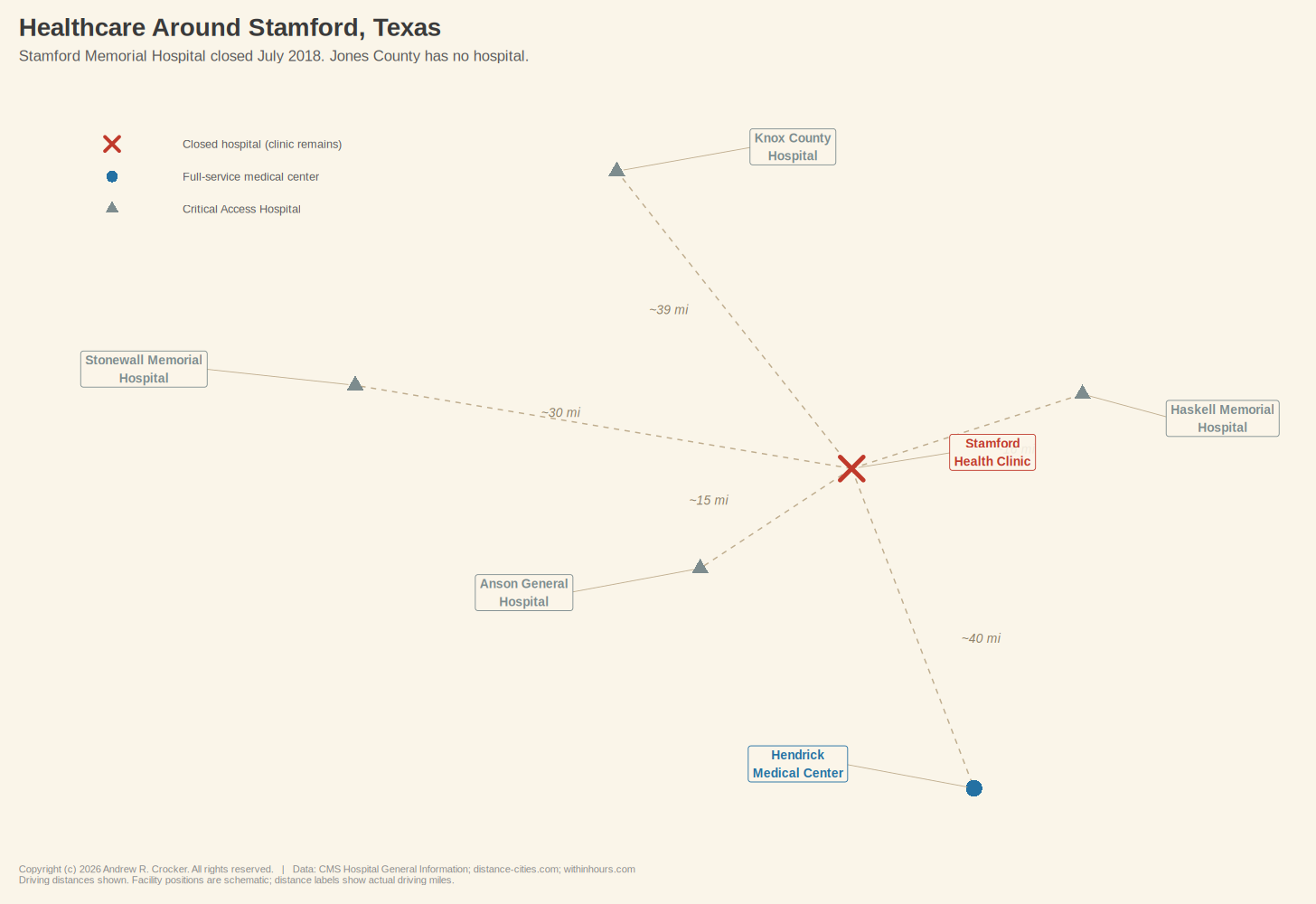

Nearly 3,000 people live in this small rural town, which sits on the border of Jones and Haskell Counties in the rolling mesquite country north of Abilene. The population has fallen 19% since 2000, a decline steep enough that Stamford’s hospital was not the only casualty: Hamlin Memorial Hospital, in the same county, closed a year later. Jones County now has no hospital at all. The nearest emergency room is roughly 40 miles south at Hendrick Medical Center in Abilene, a drive that takes about 45 minutes if you catch the lights on Highway 83, and longer if you do not.

Like many residents of Stamford, Dale Hammond works the oil fields west of Abilene. He has roughnecked on the same rigs for twenty-two years, knows every road out to the patch well enough to drive them half-asleep, and has driven them that way more than once. He is 54 now, and three years ago the clinic in town told him he had Type 2 diabetes. Ever since then, his A1C has crept steadily upward into dangerous territory.

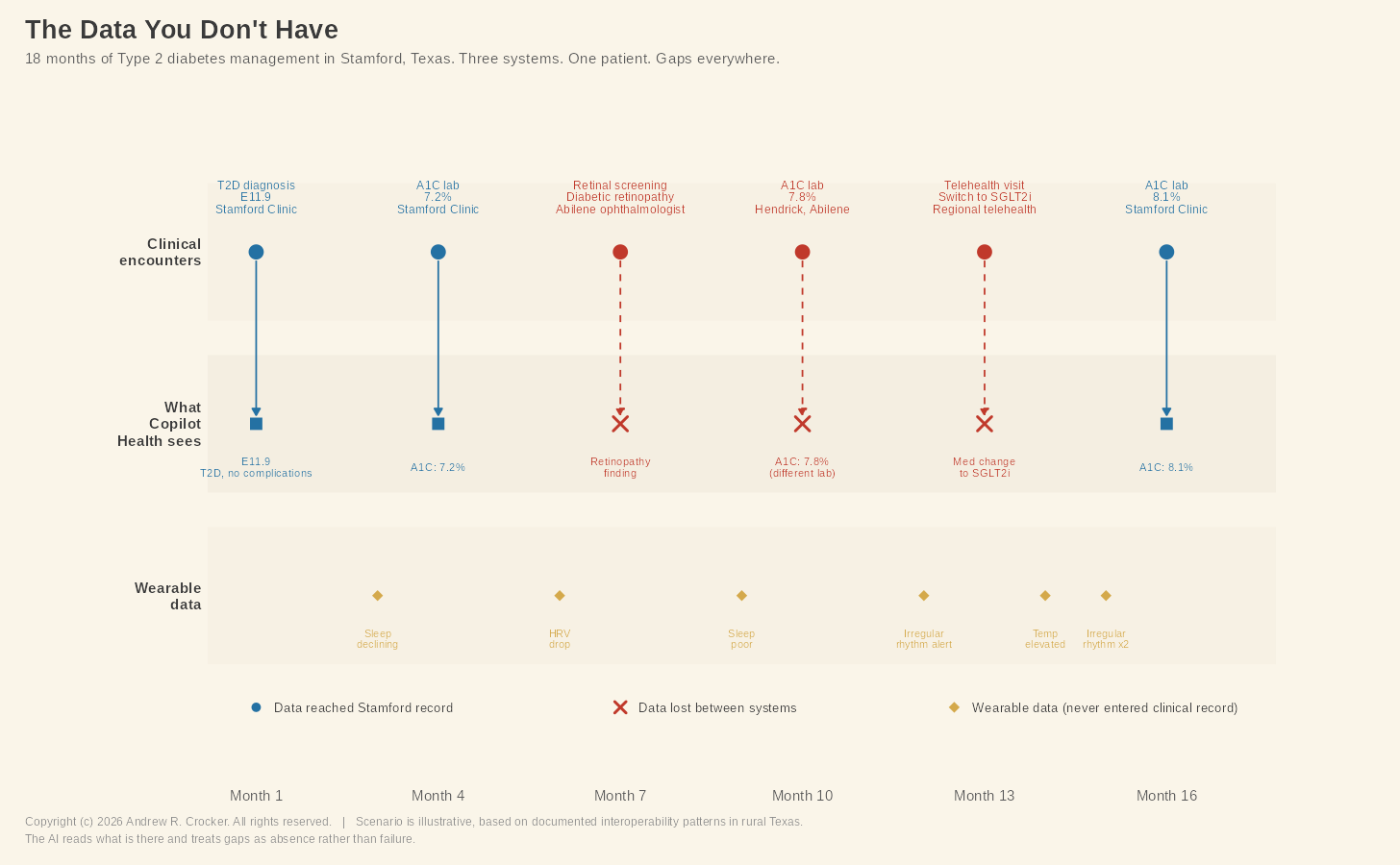

Six months ago, he drove to Abilene for a retinal screening that found early signs of diabetic retinopathy, a complication his Stamford provider may or may not know about, depending on whether the ophthalmologist’s office sent the results back and whether anyone at the clinic filed them. Two months ago, his medication was switched from metformin to an SGLT2 inhibitor, but that change happened through a regional telehealth service whose records live in a system the Stamford clinic cannot access. Ever since, his blood sugar has been erratic.

Tonight is a Tuesday, and Dale can tell something is wrong. His head hurts and he is dizzy. The clinic closed five hours ago, and Abilene is a long way down a two-lane road in the dark.

Now, Dale is a character I made up, but the scenario he is living through is real, and it is exactly the kind of moment Microsoft built Copilot Health to meet.

On March 12, 2026, at the HIMSS conference in Las Vegas, Microsoft announced Copilot Health, a dedicated space within its AI assistant where users connect their personal health data and the AI turns it into what the company calls “a coherent story.”² The platform connects to data from more than 50 wearable devices and pulls electronic health records from over 50,000 U.S. hospitals and provider organizations through a service called HealthEx.²,³ Lab results are fed in through a separate integration called Function.³

More than 230 physicians from 24 countries contributed to its development.⁴ Dominic King, Microsoft’s VP of health at Microsoft AI, told reporters at a press briefing that the product could identify when a patient’s blood pressure is “trending in the wrong direction.”⁴ The product launched with a waitlist for U.S. adults, in English only, with a subscription model planned once it leaves early access.²

So imagine for a moment that our friend Dale has Copilot Health on his phone. It pulls his wearable data and notices weeks of deteriorating sleep. It connects to his health records from the Stamford clinic and from Hendrick Medical Center, flags the upward trend in his blood glucose readings, suggests questions to bring to his next appointment with his PCP, and points him toward an endocrinologist within driving distance who accepts his insurance.⁴

Microsoft says the product is not intended to replace his doctor; rather, the idea is to make every minute Dale spends with that doctor count for more.

For someone in Dale’s position, that pitch is not cynical. The need is as genuine as his isolation, and the desire for something, anything, that helps make sense of a confusing health picture at ten o’clock on a Tuesday night, with a rip-roaring headache, is entirely understandable. Fifty million people ask Microsoft’s consumer products health questions every day.⁴ So the demand is there; it’s the system that is not ready for it.

There is one wrinkle in this scenario worth addressing before we go any further. Microsoft’s announcement showcases integration with a range of wearable devices, notably Apple Watch, Oura Ring, and Fitbit. An Apple Watch Series 11 (with full health sensors) starts around $400. An Oura Ring 4 costs roughly $500. Owning both puts a person almost a thousand bucks in the hole for hardware before they even open Copilot Health.

But the main point is that the people who most need an AI health companion are among the least likely to own the gear that makes it most useful. Most Dales in rural Texas do not own an Oura Ring. Microsoft has said it wants to bring this service to billions of people who struggle to access reliable medical advice.⁴ By design, though, Copilot Health asks its users to invest in hardware and to have the stable connectivity that much of rural Texas still does not have. The aspiration and the architecture are pointing in different directions.

Since Copilot Health is not yet available to the public, what follows is not a product review. We know what this technology can do in a controlled environment, but nobody knows how it will behave in the wild.

OpenAI launched ChatGPT Health in January 2026.⁵ Anthropic, the company behind Claude, followed days later with Claude for Healthcare. Amazon expanded its Health AI from One Medical to the broader public on March 10.⁵ None of these products has been in the wilds of America long enough to generate meaningful outcome data. Any one of them might turn out to be amazing, or it might fumble in ways nobody anticipated. The most honest answer right now to the question of how much AI health tools will help people is: well, we do not know… yet.

What we can evaluate right now is the terrain these products are about to be unleashed into. The clinical evaluations tested the engine, but nobody tested the fuel. The failures underneath these products are not hypothetical; they are well-documented problems that have been around for decades, since long before AI in healthcare was a spark in any developer’s eye. Copilot Health did not create them. Whether it can function on top of them is the big question.

The terrain we’re looking at has three features.

The first concerns who is writing the records and who is reading them. Copilot Health is not just rolling out to patients. Microsoft is simultaneously pushing Dragon Copilot, its clinical AI documentation tool, into the hospitals and clinics where those patients’ records originate. At HIMSS 2026, Microsoft announced a Rural Health Resiliency Program in partnership with Pivot Point Consulting, offering Dragon Copilot at a 60% discount to Critical Access Hospitals (CAHs), Rural Emergency Hospitals, and Rural Community Hospitals.⁶ Laura Kreofsky, Microsoft’s Director of Rural Health Resiliency, described the initiative as an equity issue, arguing that rural providers deserve AI that works within the systems they already have.⁶

That is absolutely true, and for facilities operating on such thin margins, the discount is meaningful enough to jump at. The structural problem, though, is that Microsoft is positioning itself on both ends of the patient data pipeline. Dragon Copilot shapes the clinical documentation at the point of care, and Copilot Health interprets that documentation back to the patient on the consumer side. One company both helps write the record and reads it back.

A verification layer should sit between those two endpoints, but in the facilities where these products are being deployed, it is largely nonexistent.

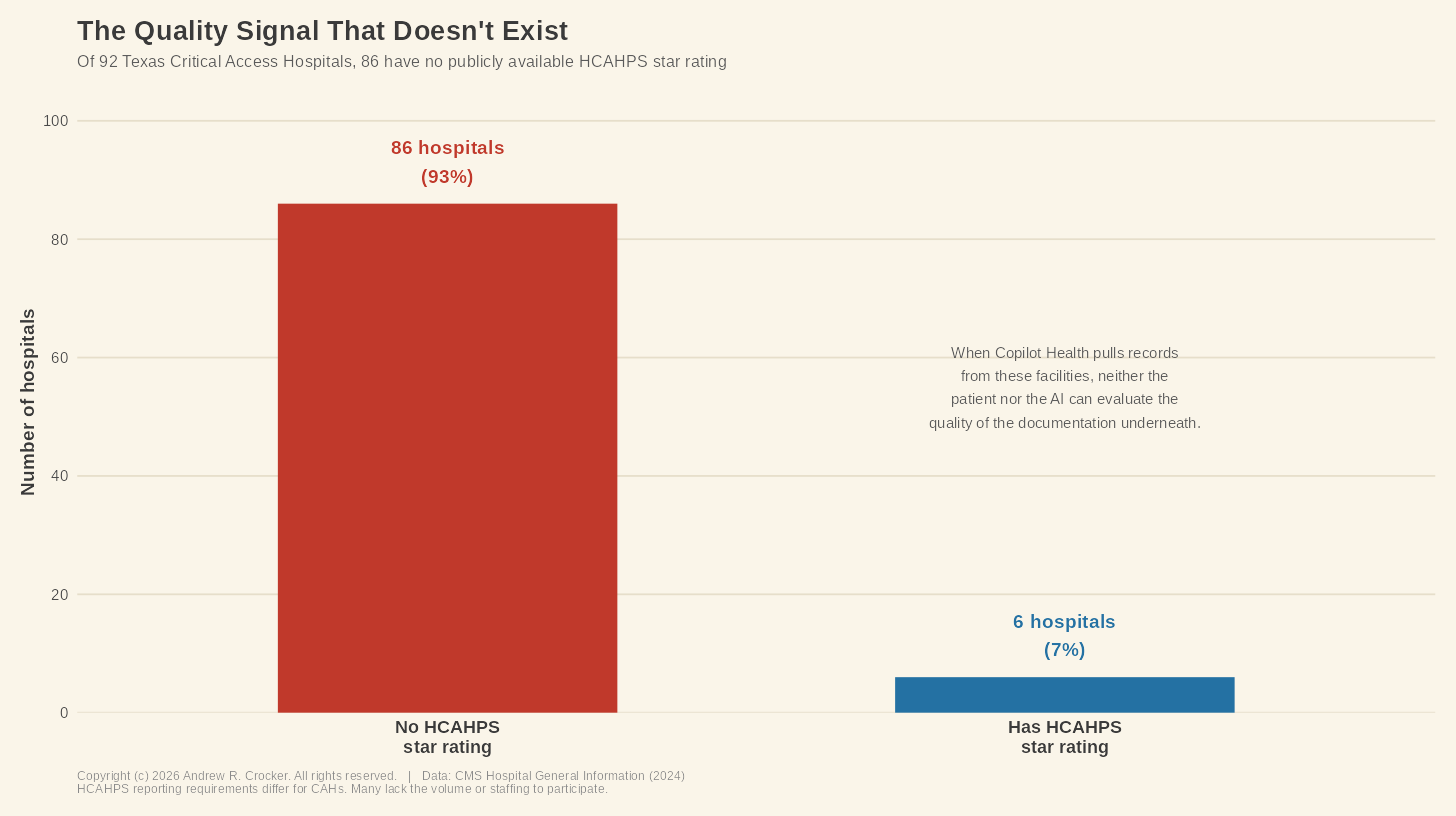

Texas has 92 Critical Access Hospitals. Nationally there are roughly 1,360, serving approximately 60 million Americans in rural areas.¹⁰ These facilities are the healthcare backbone for towns like Stamford and the tiny communities around them. Of the 92 in Texas, 86 have no HCAHPS star rating, the patient-experience measure that CMS publishes.

This problem is not the hospitals’ fault. HCAHPS reporting requirements differ for CAHs, and many lack the volume or staffing to participate. The result is that there is no independent, publicly available quality signal for the vast majority of rural Texas hospitals. When Copilot Health pulls a record from one of these facilities and weaves it into a patient’s “coherent story,” neither the patient nor the AI has a way to evaluate the quality of the underlying documentation.

Consider what that means for poor Dale. His diabetes diagnosis came from a clinic that operates without dedicated health information management (HIM) staff, where coding specificity is limited by what a facility that small can sustain. The difference between E11.9 (Type 2 diabetes mellitus without complications) and E11.319 (Type 2 diabetes mellitus with unspecified diabetic retinopathy without macular edema) is nothing to sniff at. The gap between those two codes changes what an AI infers and what it flags as urgent.

If the retinopathy finding from the ophthalmologist never made it back to the Stamford clinic’s record, the primary care code may still read E11.9, and an AI reading that code has no reason to suspect otherwise. Dragon Copilot, if it’s at the clinic, might improve documentation going forward, but the records that already exist are another matter.

None of this means the AI will fail. The technology might get through these gaps without incident. The point is that nobody knows, because the product has not been tested against records generated in these conditions. The physicians who contributed to the program’s development evaluated the AI’s clinical reasoning.⁴ They were not evaluating the completeness and coding quality of documentation from a 25-bed CAH in West Texas. The engine was tested. The fuel was not.

The second feature of the terrain concerns what happens to health data once it leaves the clinical system.

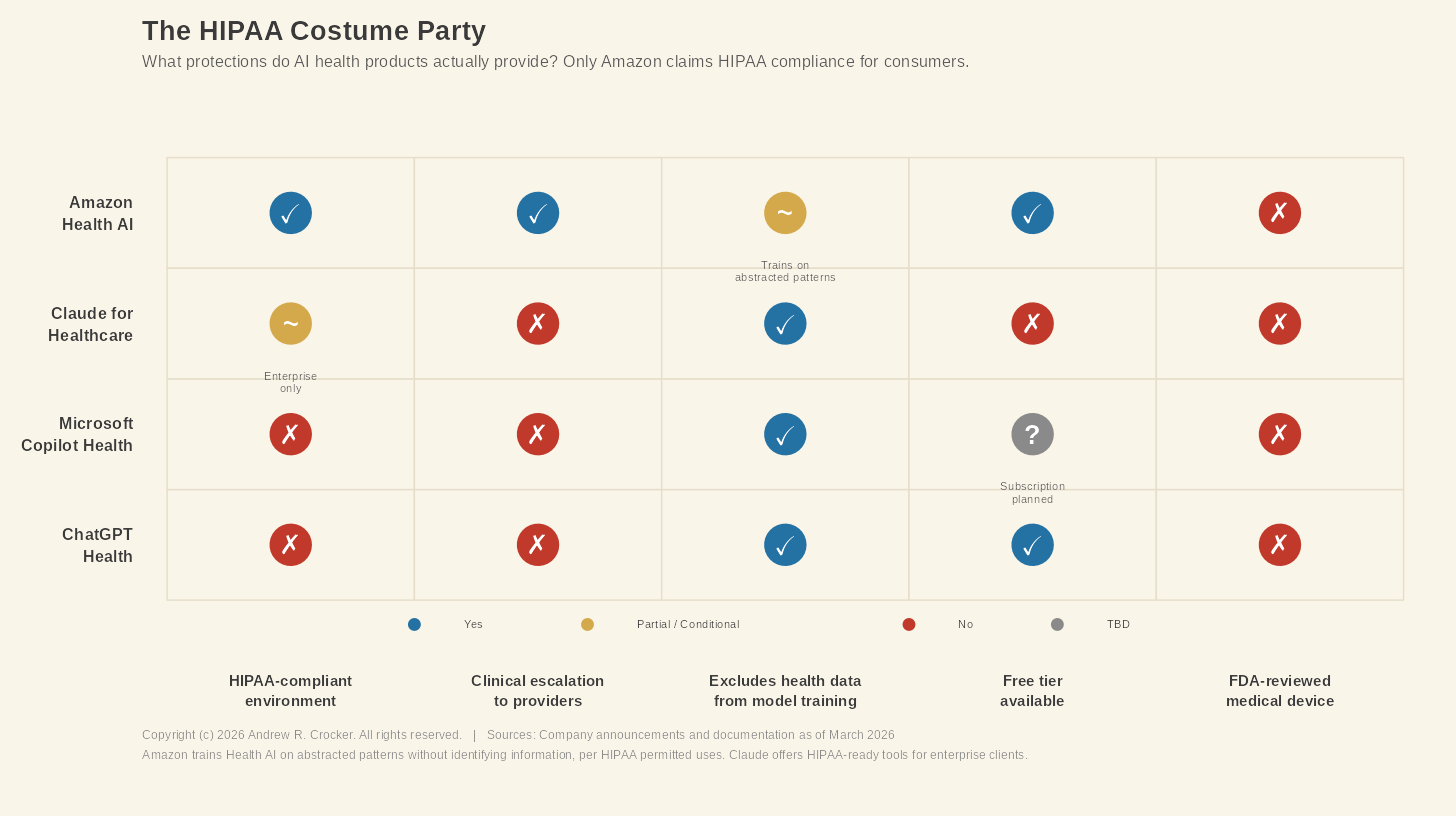

When Dale connects his health records to Copilot Health, he probably believes his data has the same protections inside Microsoft’s app that it has inside the hospital’s electronic health record. That is a reasonable assumption, but it is wrong. HIPAA, the federal law governing the privacy and security of health information, applies to covered entities, such as hospitals, clinics, and insurers, and to their business associates. Consumer health apps generally fall outside HIPAA, and HHS has confirmed that once a patient directs a covered entity to send records to a third-party app, those records no longer carry HIPAA protections in the app’s hands.⁸

Whatever Dale authorizes HealthEx to pull enters regulatory territory most patients have no way to evaluate. Senator Bill Cassidy introduced the Health Information Privacy Reform Act in November 2025 specifically to extend HIPAA-like protections to apps and wearables, which suggests Congress recognizes the problem.⁹ The bill has not passed, so the gap remains.

Still, Microsoft has made some real commitments here. Copilot Health conversations are kept separate from the rest of Microsoft’s AI products, the data is encrypted, and none of it feeds back into model training. Users can disconnect their records or delete their data whenever they want. These are meaningful protections.

But they are also voluntary. Microsoft adopted them because it chose to. No federal law requires them, and no regulator enforces them. That difference matters more than it might seem, because competitors are already split on this point. Amazon’s Health AI operates in what the company describes as a HIPAA-compliant environment, a claim neither Microsoft nor OpenAI has made for its consumer product. The protections Dale is relying on are a terms-of-service commitment, not a legal right. He has no clear way to know which protections are regulatory, which are contractual, and which are simply wishful thinking.

The regulatory gap widened further in early 2026, when the FDA relaxed its policies on wearable devices and clinical decision support software.⁷ The revised policy likely means that more AI-enabled clinical decision support tools can reach the market without FDA review, classified as non-device software rather than regulated medical devices.⁷ According to its disclaimer, Copilot Health is not intended to diagnose, treat, or prevent disease, and that disclaimer is also, conveniently, what keeps the product outside the FDA’s regulatory perimeter. Whether “non-device software” is a reasonable classification for a tool that synthesizes a patient’s complete health history and surfaces clinical patterns is a question the revised guidance leaves unanswered.

The third feature of the terrain is deceptively simple, which makes it the hardest to fix. In medical coding, there is a saying: if it isn’t documented, it didn’t happen. You cannot code what isn’t in the documentation. No documentation, no diagnosis. No diagnosis, no history. For Copilot Health, that principle cuts deeper than anyone at the product announcement seemed to notice. Simply put, the AI cannot synthesize what the record never captured.

Copilot Health is built on aggregation: it pulls a patient’s scattered health data into one place and then uses its positronic brain to make sense of it. The synthesis is only as good as what feeds it, and in places like Stamford, what feeds it is often incomplete.

Let’s return to Dale one last time. He has early diabetic retinopathy, which was documented in the ophthalmologist’s EHR system. That system is not the same one used by the Stamford clinic. For an HIM professional working in rural Texas, what comes next is not an outlier case but the baseline. Getting that finding into Dale’s primary care record depends on a chain of handoffs that a facility with minimal administrative staff cannot always guarantee. Dale’s telehealth medication change faces the same problem. His medication list in the primary care record may still show metformin. When Copilot Health reads that record, it reads what is there, not what is supposed to be there.

Consider another example. Three weeks ago, Dale’s Apple Watch flagged an irregular heart rhythm. He saw the alert on his phone, raised his eyebrows at it, and went back to work. That alert lives in Apple Health, and if Dale connects Apple Health to Copilot Health, the AI will see it. What the AI cannot do is tell the difference between clinically significant atrial fibrillation and noise from a consumer device. In a diabetic patient, that difference changes the stroke risk calculation entirely. No provider ever saw the alert, and no code was ever assigned. The AI is working without a clinician’s judgment, on a signal that never entered the clinical record.

What these gaps add up to is a health record that is fragmented, partially accessible, and supplemented by wearable data that is not the same thing as a clinical measurement. Copilot Health promises to turn all that into a coherent story. The question is whether that coherence is real or manufactured. A narrative stitched together from incomplete fragments, presented neatly and with confidence, can be less useful than the fragments themselves, because it replaces the patient’s awareness that the picture is incomplete with the AI’s assurance that it isn’t.

This is not a criticism aimed specifically at Microsoft. Every AI health product entering the market faces the same problem, because the health data infrastructure was not built for what these products need. The issues run deeper in rural communities, where leaner HIM staffing and thinner resources mean the record is least reliable in exactly the places where reliability matters most.

Copilot Health might work wonderfully for a Dale in Austin whose records sit inside a single large health system, whose wearables stream clean data, and whose documentation is kept accurate by a well-staffed HIM department. That Dale exists. Rural Dale also exists, and his records were generated under different conditions. The AI reading them will not know what is missing, so it cannot tell anyone. The coherent story will feel complete because the AI presents it that way.

Don’t get me wrong. I believe this technology is as wonderful as it is new. But we do not yet know what it will do in the wild. What we do know is what the data looks like in the places where these products are needed most. Again, the engine may be extraordinary, but the fuel is not.

As these products move from waitlists to widespread use, the expertise that matters most is not in artificial intelligence. The people who matter are the HIM professionals who understand what is in those records and, more importantly, what is not. The AI can read the record. Somebody still has to make sure the record is worth reading.

That job did not become less important when Microsoft announced Copilot Health. In a 2023 AHIMA workforce survey, two-thirds of health information professionals reported persistent staffing shortages in their workplaces, which means the people most needed to get the record right are in short supply, too.¹¹

Whether anyone is checking the fuel is the wrong question.

Nobody is.

References

1. DeFoore R. Stamford Healthcare System announces new healthcare service design [press release]. Big Country Homepage. July 2, 2018. https://www.bigcountryhomepage.com/news/local-news/stamford-er-closing-during-hospital-redesign/1279566673/; Stamford Healthcare System closing ER, discontinuing inpatient care. KTXS. July 2, 2018. https://ktxs.com/news/big-country/stamford-healthcare-system-closing-er-discontinuing-inpatient-care; Stamford Hospital District. Hours and contact. https://www.stamfordhosp.com/

2. King D. Microsoft launches Copilot Health at HIMSS 2026. Healthcare Brew. March 12, 2026. https://www.healthcare-brew.com/stories/2026/03/12/microsoft-launches-ai-platform-copilot-health

3. HealthEx. HealthEx partners with Microsoft to bring consumers’ personal health history to Copilot Health [press release]. Globe Newswire. March 12, 2026. https://www.globenewswire.com/news-release/2026/03/12/3254936/0/en/HealthEx-Partners-with-Microsoft-to-Bring-Consumers-Personal-Health-History-to-Copilot-Health.html

4. Olsen E. Microsoft launches dedicated health AI chatbot. Healthcare Dive. March 12, 2026. https://www.healthcaredive.com/news/microsoft-copilot-health-chatbot/814514/

5. Shepardson D. Microsoft’s Copilot Health competes with OpenAI, Amazon. Axios. March 12, 2026. https://www.axios.com/2026/03/12/microsoft-copilot-health

6. Pivot Point Consulting. Microsoft Rural Health Resiliency Program and Pivot Point Consulting drive AI-enabled innovation by bringing Dragon Copilot to rural hospitals nationwide [press release]. PR Newswire. March 3, 2026. https://www.prnewswire.com/news-releases/microsoft-rural-health-resiliency-program-and-pivot-point-consulting-drive-aienabled-innovation-by-bringing-dragon-copilot-to-rural-hospitals-nationwide-302702031.html

7. Mahoney J, Feurstein-Simon R, Lu H, et al. FDA “cuts red tape” on clinical decision support software and wearable products for general wellness. Arnold & Porter. January 2026. https://www.arnoldporter.com/en/perspectives/advisories/2026/01/fda-cuts-red-tape-on-clinical-decision-support-software

8. US Department of Health and Human Services. The access right, health apps, and APIs. HHS.gov. https://www.hhs.gov/hipaa/for-professionals/privacy/guidance/access-right-health-apps-apis/index.html

9. Cassidy B. Health Information Privacy Reform Act, S. 3097, 119th Cong (2025). Inside Privacy. November 14, 2025. https://www.insideprivacy.com/health-privacy/u-s-senate-introduces-the-health-information-privacy-reform-act/

10. Applied Policy. Critical Access Hospitals. October 2024. https://www.appliedpolicy.com/critical-access-hospitals/

11. American Health Information Management Association. Health information workforce shortages persist as AI shows promise: AHIMA survey reveals [press release]. 2023. https://www.ahima.org/news-publications/press-room-press-releases/2023-press-releases/health-information-workforce-shortages-persist-as-ai-shows-promise-ahima-survey-reveals/